Last week for me was all about getting familiar with Stable Diffusion, starting to see if my familiarity with paid tools like Midjourney could translate over to the open source tools that will let me get more fine-grained control of the image and video creation process.

This week, I got down to business with video. Generative AI video is certainly in its pre-infancy, so while the image generation tools are becoming professional grade (as we’ve seen with Adobe’s entry into the space), video is still very rough to work with and get good results. I have a whole process around video production in mind, which I’m gradually chipping away at – but the tl;dr is that I think text-to-video will hit limitations, whereas video-to-video could have more immediate applications.

It’s also a great opportunity to get to the innovative edge of the field, which is what I’m seeking to do, and I think I managed a small improvement to a process already, which I’ll get into below.

New tools in the toolbelt

First, let’s cover some of the new tools & techniques I’ve learned:

TemporalKit – I’ve been researching a few different techniques for video generation. Based on the trade-offs, this felt like one of the best options for creating video. Especially in video-to-video. This is also where I think I’ve developed a way to improve the process.

Wav2Lip – This is a great tool for adding lip-syncing to videos. The video-to-video process often removes mouth movements, so even if the original video had clearly spoken audio, I’ve needed to use this to fix the mouths with pretty good results.

I had some fun with the tools I’ve learned so far and made a meme for my friend Jimmy:

Improving TemporalKit

Background

TemporalKit is a great tool developed by Ciara Rowles. It packages up the technique originally showcased by TokyoJab, who realized that putting keyframes of a video in a grid will produce a result where the images in the grid remain more similar to each other than if you rendered them frame by frame.

Stable Diffusion seems to want to maintain the similarity of images in a grid.

Current issues

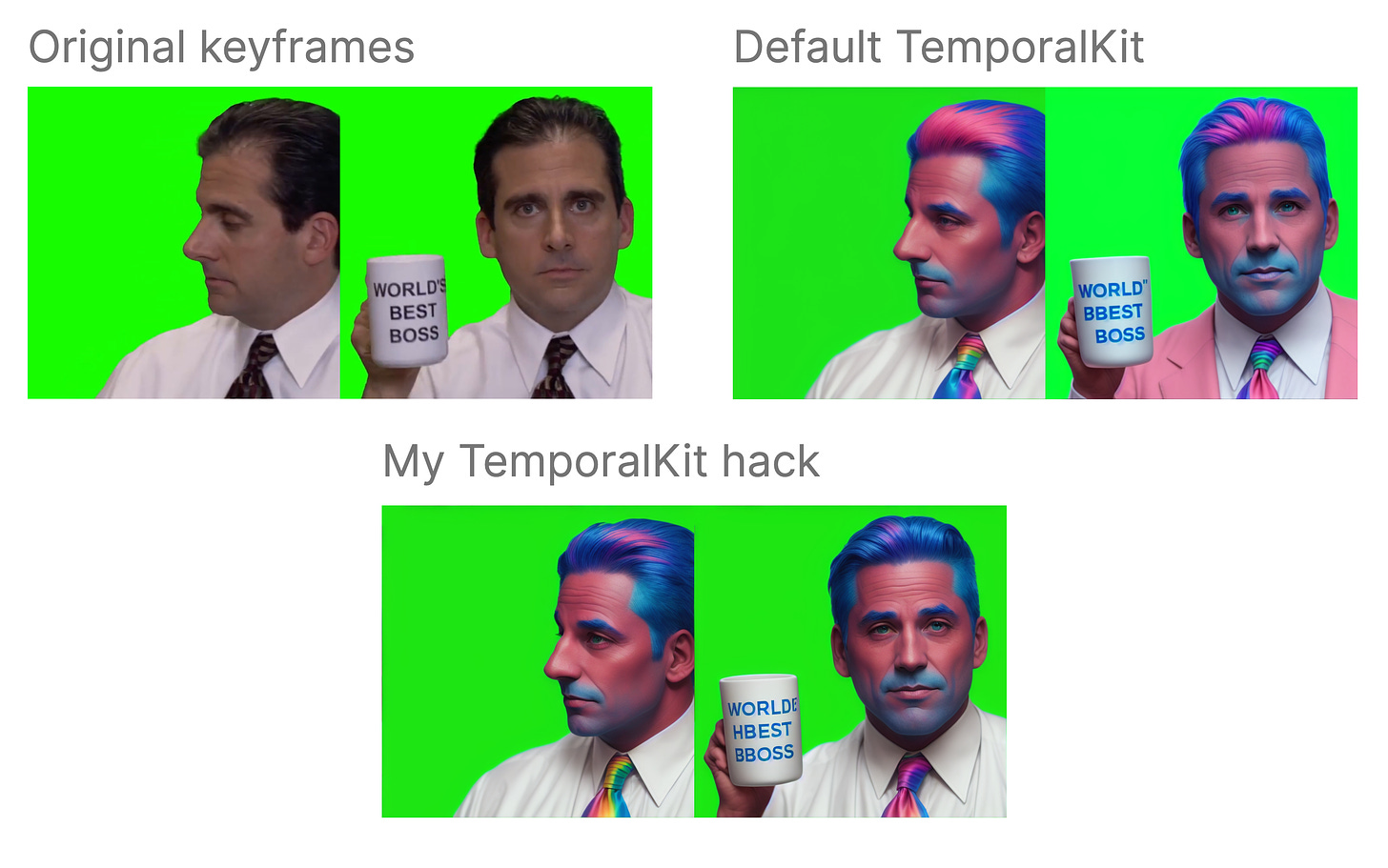

Aside from the size limitation (I can only process a 2048x2048 sized grid of images, so I tend to aim for keyframes at around 512x512, which is on the small side for video), but more importantly is the lack of consistency between grids of keyframes.

You can see in this example, the starting key frame and the ending keyframe are from different sets of keyframe grids. Stable Diffusion has shifted details such as adding a pink coat to the second group.

My technique: TemporalKit Hack

I’m still early in my journey to better understanding the diffusion algorithm at play here, so I’m not sure exactly why the grid method works at all – but something did strike me:

The frames in the grid would seem to exert an influence over each other in order to drive a similarity between them.

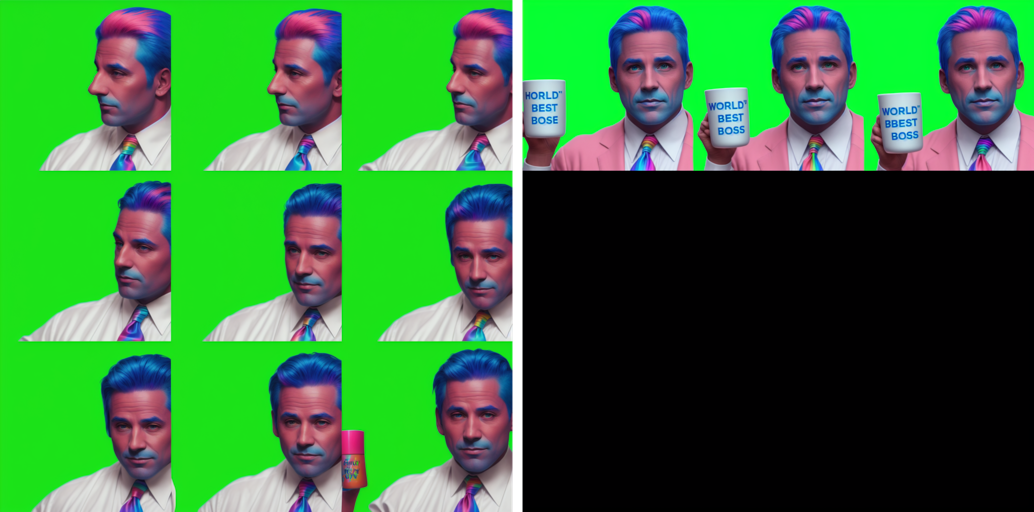

This led me to think – What if you only varied one of the frames within the grid and kept the others constant?

I made my own grid, choosing from a range of head poses to form the outer 8 keyframes of the grid template and then swapped out only one keyframe in the center:

Now, processing each of these grids, creates a 12 image batch to run vs a 2 image batch (glad to have an NVidia 4090 for this), but with much more consistent results:

It’s curious to note some of the subtle changes in the surrounding frames as well. I’m certainly planning to learn more about the diffusion algorithm to understand what is driving this.

Note on TemporalNet

I know that Ciara Rowles has a project called TemporalNet which I haven’t had a chance to experiment with – so it’s possible she’s already addressed some of the problems with TemporalKit here.

Final result

Here’s a video showing how it all comes together, along with experiments with another prompt & diffusion model. If this technique proves valuable (compared to TemporalNet), I’ll aim to script the process for longer videos, which would be untenable with the current manual process.

And of course, the pre-requisite Anime conversion…